Oracle 2020: A Glimpse Into the Future of Database Management - Part 1

The year is 2020, and the roles and responsibilities of the Oracle professional have changed dramatically over the past 15 years. To fully understand the benefits of computer hardware in the year 2020, we must begin by seeing how the constant changes in CPU, RAM, and disk technology have affected database management over the past six decades.

Once we see the history in its correct perspective, we can understand the evolution of Oracle database systems into their current state.

A Brief History Lesson

The economics of server technology have changed radically over the past 60 years. In the 1960s, IBM dominated the server market with giant mainframe servers that cost millions of dollars. These behemoth mainframes were water-cooled and required huge operations centers and a large staff to support their operations.

- 1960s — Only the largest of corporations could afford their own data processing center, and all small- to mid-sized companies had to rent CPU cycles from a data center in order to automate their business processes.

- 1970s — Small UNIX-based servers existed, such as the PDP-11. However, they were considered far too unreliable to be used for a commercial application.

- 1980s — In 1981, the first commercial personal computer (PC) was unveiled, and practically overnight, computing power was in the hands of the masses. Software vendors rushed to develop useful products that would run on a PC, and the introduction of VisiCalc heralded the first business application outside the mainframe domain.

- 1990s — Oracle appears, and relational databases dominate the IT market. Large shops have hundreds of small UNIX-based computers for their Oracle databases.

- 2000s — Monolithic servers reappear, and Oracle shops undertake a massive server consolidation. By 2008, servers with 256 processors run hundreds of Oracle instances.

- 2010s — Disk becomes obsolete, and all Oracle databases are solid-state. Hardware costs fall so much that 70 percent of the IT budget is spent on programmers and DBAs.

Largely as a result of advances in hardware technology, the Oracle professionals of the year 2020 face far different challenges than their predecessors way back in 2005.

- Large mainframe servers replaced the minicomputers of the early 21st century

- All proprietary software is accessed over the Internet

- PCs are replaced by IAs (Internet Appliances)

- High-speed network bandwidth allows instant content delivery and server-to-server communications

- The Internet becomes non-anonymous (thanks to Oracle’s Larry Ellison)

- All database systems are solid-state

- Databases become three-dimensional, allowing for temporal data presentation

It’s important to note that all of these changes were a direct reaction to advances in hardware technology. Let’s quickly review the major advances in hardware over the past 15 years:

- 2008 — The first database server with more than 1,000 CPUs is introduced, enabling massive IT server consolidation. Dubbed “Special K” servers because they have more than 1,000 processors, these boxes allow even the largest corporation to place all of their Oracle instances on a single server.

- 2010 — The first 128-bit processors are introduced.

- 2014 — Hardware prices fall so much that they become negligible, and the bulk of the IT budget shifts to human costs.

- 2015 — Gallium Arsenide replaces silicon for RAM chips, increasing access speed into picoseconds.

- 2018 — Worldwide high-speed satellite becomes the backbone of the Internet.

- 2020 — Optical eye readers can identify your retina signature, and a quick glance is all that is required for positive identification.

These hardware changes also precipitated important social changes, and the increasing availability of computing resources led to worldwide infrastructure regulations:

- 2005 — Microsoft Office 2005 uses XML standards for MS Word documents and spreadsheets. Business documents are now sharable among all software.

- 2009 — The United Nations passes the Worldwide Internet Certification Act (WICA), requiring positive identification for Internet access.

- 2010 — The SQL-09 committee simplifies data query syntax, allowing natural language database communication.

- 2011 — W3C introduces the Verifiable Internet Protocol (VIP), requiring verifiable identity to access the Web.

- 2011 — Internic implements WICA and VIP, reducing spam and cybercrime by 95 percent worldwide.

- 2012 — Luddites protest the new lack of Internet privacy. The U.S. Congress passes the Data Privacy Act (DPA), requiring all custodians of confidential data to meet rigorous security and privacy requirements.

- 2013 — Internet bandwidth increases to allow high-speed communications between any two servers.

- 2018 — Internet Appliances (IA) replace personal computers, and all proprietary software is accessible only through the Internet.

- 2020 — Advertising becomes active, and retinal imaging allows for instant identification and customizing of marketing messages. Walk down the street and billboards target their content to the needs of those viewing it at that moment.

Oracle Corporation played an integral role in the movement, offering low-cost database management and capturing more than 90 percent of the database market in 2020. Over the past 15 years, Oracle was a major force in facilitating the new technology:

- 2008 — Oracle 14m provides inter-instance sharing of RAM resources. All Oracle instances become self-managing.

- 2010 — Oracle 16ss introduces solid-state, non-disk database management.

- 2011 — Oracle’s Larry Ellison finances the Worldwide Internet Identification Database, requiring non-anonymous access and reducing cybercrime. Ellison receives the Nobel Peace Prize for his humanitarian efforts.

- 2016 — Oracle 17-3d introduces the time dimension to database management, allowing three-dimensional data representation.

- 2018 — Oracle starts manufacturing IAs for $50 each, replacing PCs and making the Internet available everywhere in the world. Ellison becomes the world’s first trillionaire.

As we see, there have been a huge number of changes over the past 15 years, but what caused them? Let’s take a closer look at how the advances in computer hardware precipitated these life-changing technologies.

Hardware Advances Between 2005 and 2020

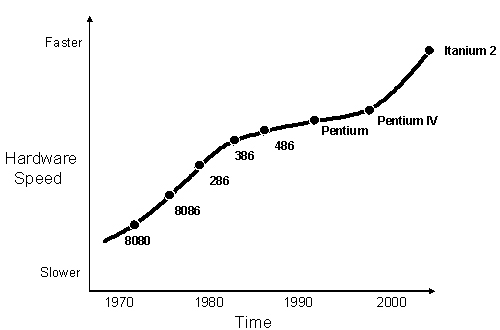

Gordon Moore, Director of the Research and Development Laboratories at Fairchild Semiconductor, published a research paper titled “Cramming More Components onto Integrated Circuits” in 1965. Moore performed a linear regression on the rate of change in server processing speed and costs, and noted an exponential growth in processing power and an exponential reduction of processing costs. This discovery led to “Moore’s Law,” which postulated that CPU power gets four times faster every three years (refer to figure 1).

Figure 1: Moore’s Law.
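To make the compounding concrete, here is a minimal Python sketch of Moore’s Law as this article states it (a fourfold gain every three years). The function name `cpu_speedup` and its parameters are illustrative assumptions, not something taken from Moore’s paper.

```python
def cpu_speedup(years: float, factor: float = 4.0, period_years: float = 3.0) -> float:
    """Relative CPU power after `years`, assuming power multiplies by
    `factor` every `period_years` (the article's statement of Moore's Law)."""
    return factor ** (years / period_years)


if __name__ == "__main__":
    # Hypothetical horizons chosen only for illustration.
    for years in (3, 6, 15):
        print(f"after {years:2d} years: {cpu_speedup(years):,.0f}x")
```

Under that assumption, the 15 years between 2005 and 2020 amount to five quadruplings, or a roughly 1,024-fold increase in CPU power.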

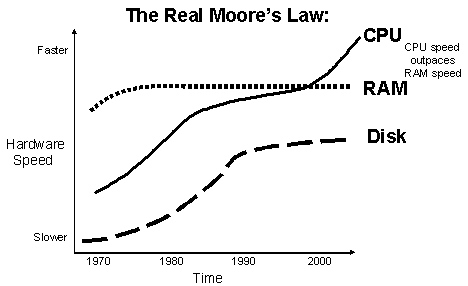

However, the “real” Moore’s Law cannot be boiled down into a one-size-fits-all statement to the effect that everything always gets faster and cheaper.

Prices are always falling, but there are important exceptions to Moore’s Law, especially with regard to disk and RAM technology (refer to figure 2):

Figure 2: The “real” Moore’s Law.

As we can see, these speed curves are not linear, and this trend has a profound impact on the performance of Oracle databases. Let’s take a closer look.

Disk Storage Changes

I’m old enough to remember when punched cards were the prominent data storage device. Every year, I would get my income tax refund check on a punched card, and we would make Christmas trees from punched cards in the “Data Processing” department.

My college kids have no idea what the term “Do not fold, spindle or mutilate” means, and they missed out on the fun of dropping their card deck on the floor and having to use the giant collating machines to re-sequence their deck.

In 1985, I remember buying a 1.2 gigabyte disk (the IBM-3380 disk) for more than $250,000. Today, you can buy 100 GB disks for $10, and 100 GB of RAM for $100. With these types of advances, Moore’s Law for storage costs indicates that:

- Disk storage costs fall 10x every year.

- Storage media become obsolete every 25 years.
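As a rough cross-check, the two disk price points quoted above can be turned into an average yearly rate of decline. The sketch below is only a back-of-the-envelope estimate: it treats “more than $250,000” as exactly $250,000, takes “today” to be 2020, and assumes a steady geometric decline over the whole period. The function and variable names are hypothetical.

```python
def annual_decline_factor(cost_start: float, cost_end: float, years: int) -> float:
    """Average factor by which cost shrinks each year, assuming a
    constant geometric decline between the two price points."""
    return (cost_start / cost_end) ** (1.0 / years)


# Price points taken from the text above (dollars per gigabyte).
cost_per_gb_1985 = 250_000 / 1.2   # 1.2 GB IBM 3380 for about $250,000
cost_per_gb_2020 = 10 / 100        # 100 GB disk for $10

factor = annual_decline_factor(cost_per_gb_1985, cost_per_gb_2020, 2020 - 1985)

if __name__ == "__main__":
    print(f"average yearly decline in cost per GB: {factor:.2f}x")
```

Because this is an average across 35 years, it smooths over any individual year and is not expected to match a single-year rule of thumb.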

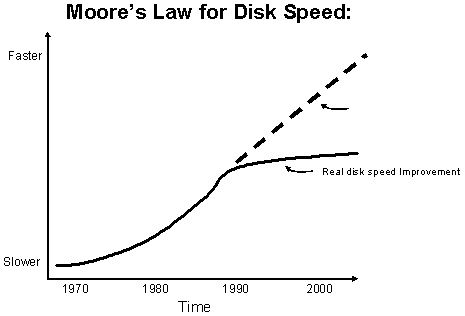

Note that the change to Moore’s Law for disks shows the limitations of the spinning platter technology (refer to figure 3).

Figure 3: Disk speed peaked in the 1990s.

Platters can spin only so fast without becoming aerodynamic, and the disk vendors were hard-pressed to keep their technology improving in speed. Their solution was to add a RAM front-end to their disk arrays, and sophisticated, asynchronous read-write software to provide the illusion of faster hardware performance.

RAM Storage Changes

Today, in 2020, you can buy 100 GB of RAM for only $100, with access speeds 600,000 times faster than the ancient spinning disk platter of the 20th century. In 2020, a terabyte of RAM costs less than $200.

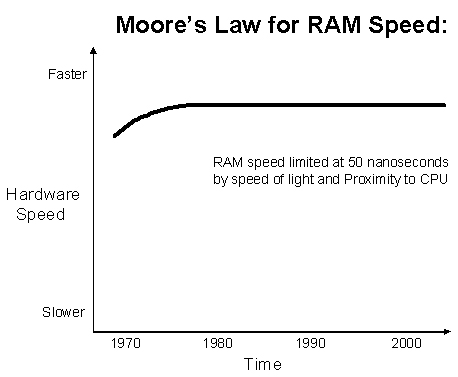

The introduction of Quantum-state Gallium Arsenide RAM in 2009 was the largest breakthrough in RAM in more than 40 years. Before 2009, RAM always became cheaper every year, but it did not get faster. This meant that CPU speed continued to outpace memory speed, and RAM subsystems had to be localized to keep the CPUs running at full capacity.

Figure 4: Silicon chips did not increase in speed.

Until 2009, RAM speed remained constant at about 20 microseconds (millionths of a second), and even the solid-state database had to deal with the continually increasing speed of CPU resources. Let’s examine the CPU changes over the past 15 years.

Processor Changes

The same trend also exists for processor costs and speed. In the 1970s, a 4-way SMP processor cost more than $3,000,000. Today, in 2020, the same CPU can be purchased for less than $300. Every three years, CPUs continue to quadruple in speed while their cost is cut in half.

I/O bandwidth capacity doubles every ten years:

- 8-bit: 1970s

- 16-bit: 1980s

- 32-bit: 1990s

- 64-bit: 2000s

- 128-bit: 2010s

- 256-bit: 2020s
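The progression in the list above is a simple doubling per decade, which can be sketched as follows. This is only an illustration of the pattern as the article lists it; the function name `word_size_bits` and the choice of the 1970s as the base decade are assumptions made for the example.

```python
def word_size_bits(decade_start: int, base_decade: int = 1970, base_bits: int = 8) -> int:
    """Bus width for a decade, assuming it starts at `base_bits` in
    `base_decade` and doubles every ten years, as in the list above."""
    decades_elapsed = (decade_start - base_decade) // 10
    return base_bits * 2 ** decades_elapsed


if __name__ == "__main__":
    for decade in range(1970, 2030, 10):
        print(f"{decade}s: {word_size_bits(decade)}-bit")
```

Running it reproduces the six entries in the list, from 8-bit in the 1970s to 256-bit in the 2020s.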

These super-cheap, super-fast processors sounded the death knell for the age of small computers, and server blades (and Oracle10g Grid computing) were replaced by large, monolithic servers.

Between 2005 and 2009, RAM had to be physically localized near the CPU to keep the processors running at full capacity.

After 2009, the speed of RAM increased to picoseconds (trillionths of a second); this development changed server architectures. The largest source of latency was now the wires between the CPU and RAM, and fiber optic cables were required to keep up with the processing speeds. During this period, computer servers first began to take on the familiar tower configuration that we know today. (As we all know, the tower configuration is required to minimize the length of fiber optic cable between the CPU and RAM, and this is required to keep the CPUs operating at full capacity.)

As RAM speed broke the picosecond threshold and approached the speed of light, even the fastest 20th century networks could not keep up with the processing demands. Quantum mechanics and atom-state technology were combined with fiber optics to improve line speeds to keep pace with the hardware.

These advances in hardware made minicomputers instantly obsolete, and management recognized that multiple servers were far too labor-intensive. Starting in 2005, we began to see the first wave of the massive server consolidation movement. The large, 64-bit servers with 16, 32, and 64 CPUs became so affordable that companies abandoned their server farms in favor of a single-server source.

Conclusion

This article has shown the major changes to Oracle database technology between 2005 and 2020, and demonstrated how hardware advances preceded and facilitated the changes to Oracle.

The main points of this article include:

- RAM speed remained essentially unchanged until 32-state Gallium Arsenide technology broke the picosecond barrier.

- Solid-state RAM disks made platter disks obsolete and heralded the creation of the first solid-state Oracle architecture.

- Improvements in Internet bandwidth made it possible to have on-demand software delivery from Oracle.

In our next installment, we will consider how the Oracle DBA’s job role is far different in 2020 than it was in 2005. We will also examine the changes to Oracle software over the past 15 years and see how the changing database technology has drastically changed the duties of the Oracle DBA.

--

Donald K. Burleson is one of the world’s top Oracle Database experts with more than 20 years of full-time DBA experience. He specializes in creating database architectures for very large online databases and he has worked with some of the world’s most powerful and complex systems. A former Adjunct Professor, Don Burleson has written 15 books, published more than 100 articles in national magazines, serves as Editor-in-Chief of Oracle Internals and edits for Rampant TechPress. Don is a popular lecturer and teacher and is a frequent speaker at Oracle OpenWorld and other international database conferences. Don’s Web sites include DBA-Oracle, Remote-DBA, Oracle-training, remote support and remote DBA.

Contributors : Donald K. Burleson

Last modified 2006-01-05 10:02 AM